Written by Jordan Richard Schoenherr, Assistant Professor, Psychology, Concordia University

Along with the recent reciprocal drone strikes by Iran and the United States in Syria, Russia continues to unleash its arsenal on Ukrainian civilian and military targets alike. While the Russian armies have started using outdated weapons, novel technologies remain the objects of fascination on the battlefield.

Hypersonic missiles and nuclear weapons have understandably grabbed media attention. However, drone warfare continues to occupy a central role in the conflict.

Ukraine’s not the only battlefield. Drone warfare has played a significant role in the Armenia-Azerbaijan conflict, with Armenia’s superior conventional forces being challenged by Azerbaijan’s kamikaze drones, strike UAVs and remotely controlled planes. A world away, China’s drones continue to test Taiwan’s defensive capabilities and readiness.

Drones are not the only weapons. As the global Summit on Responsible AI in the Military Domain (REAIM) illustrates, there is a growing recognition that lethal autonomous weapons systems (LAWS) pose a threat that must be reckoned with.

Understanding this threat requires grasping the psychological, social and technological challenges these weapons present.

Killing and psychological distance

Psychology is at the heart of all conflicts. Whether the perceived threat is existential or territorial, individuals band together in groups to make gains or avoid losses.

A reluctance to kill stems from our perceived humanity and membership in the same community. By turning people into statistics and dehumanizing them, we further dull our moral sense.

LAWS remove us from the battlefield. As we distance ourselves from human suffering, lethal decisions become easier. Research has demonstrated that distance is also associated with more antisocial behaviours. When viewing potential targets from drone-like perspectives, people become morally disengaged.

Autonomy, intelligence differ

Many modern weapons rely on artificial intelligence (AI), but not all forms of AI are autonomous. Autonomy and intelligence are two distinct characteristics.

Autonomy has to do with control over specific operations. A weapons system might gather information and identify a target autonomously, while firing decisions are left to human operators. Alternatively, a human operator might decide on a target and release a self-guided weapon that homes in and detonates autonomously.

Autonomous weapons are not new to warfare. At sea, self-propelled torpedoes and naval mines have been in use since the mid-1800s. On the ground, elementary landmines have given way to autonomous turrets.

In the air, Nazi Germany wielded V1 and V2 rockets and radio-controlled munitions. Heat-seeking and laser-guided precision munitions followed by the 1960s and were used by the U.S. in Vietnam. By the 1990s, the era of “smart weapons” was upon us, bringing with it questions of our ethical obligations.

Contemporary LAWS have been framed as the “natural evolutionary path” of warfare. We can draw parallels between single drones and smart weapons, although drone swarms represent a new kind of weapon.

A swarm’s ability to co-ordinate the actions of its drones gives it the potential to overwhelm human forces. That degree of co-ordination, in fact, requires a higher level of autonomy. If the swarm is sufficiently large, a single human operator could not hope to maintain enough situational awareness to control it.

When we cede lethal decisions to LAWS, their accuracy and reliability become paramount concerns.

Accuracy and accountability

The potential for reduced human error is often used to recommend LAWS. While an actuarial approach to AI ethics is hardly the best or only way to make moral decisions about AI, reliable data is essential to judge the accuracy and improve the operations of LAWS. However, it is often lacking.

A review of U.S. drone strikes over a 15-year period suggested that only about 20 per cent of more than 700 strikes were acknowledged by the government, with an estimated 400 civilian casualties.

In the early stages of the Russian invasion of Ukraine, reports suggested that Ukrainians destroyed about 85 per cent of the drones launched against them. In the most recent attacks, they have destroyed more than 75 per cent of the drones.

These statistics might suggest drones aren’t particularly effective. But the minimal cost and large numbers of drones mean that even if a small proportion of the weapons are successful, the damage and casualties can be significant.

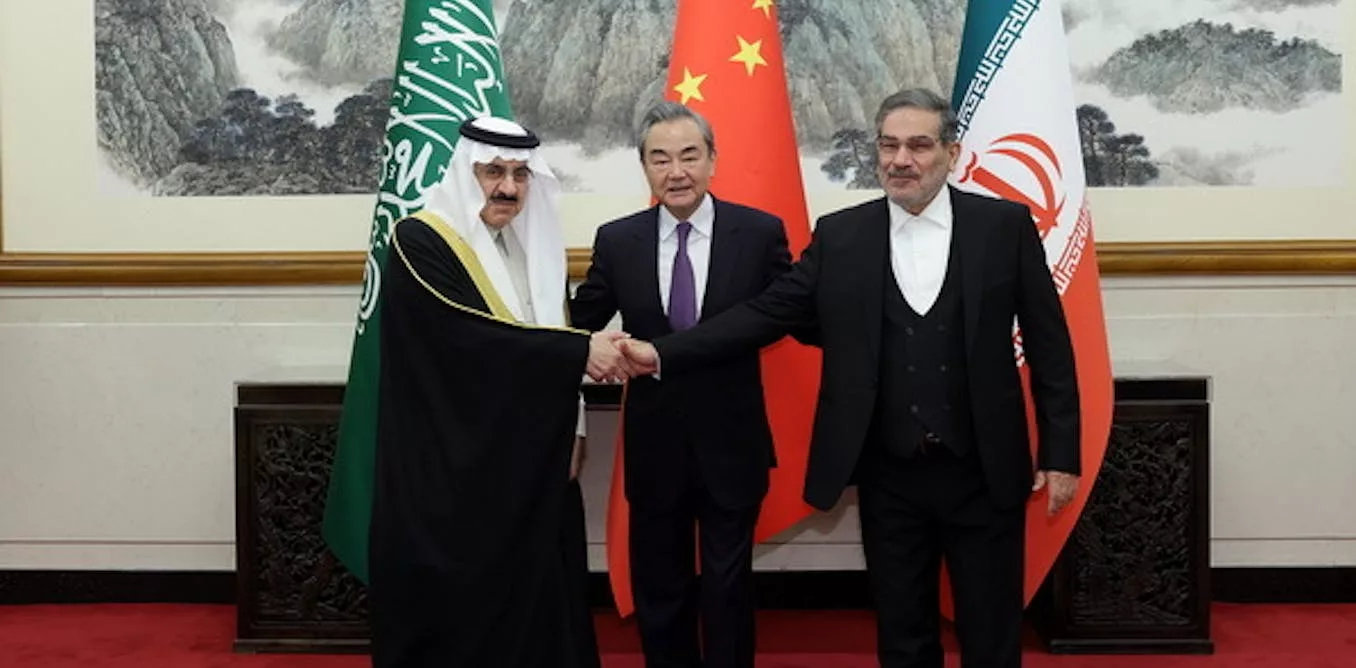

When assigning responsibility, we have to consider who manufactures these weapons. They are not always homegrown. Drones used by Russia in the Ukrainian conflict hail from China and Iran.

Inside these drones, many parts come from western manufacturers. Understanding responsibility and accountability in conflicts requires that we consider the international supply chains that enable LAWS.

Can LAWS be outlawed?

Whether LAWS represent a unique threat to human rights that must be banned — like landmines — or otherwise controlled by international laws, there is widespread agreement that we must re-evaluate existing approaches to regulation.

REAIM is not alone in attempting to regulate LAWS. The United Nations, multilateral groups and countries like the U.S. and Canada have all developed or proposed standards, or are reviewing the sufficiency of existing ones.

These efforts face an array of practical issues. In many cases, the principles are framed as best practices and viewed as voluntary rather than enforceable. States might also be reluctant to adopt them, preferring to remain consistent with their allies.

Treaties and regulations also create social dilemmas. As with cyberweapons, nations must decide whether to adhere to the rules while others develop superior capabilities.

Even if LAWS are wholly or partially banned, there is still considerable room for interpretation and rationalization.

In July 2022, Russia said responsibility for LAWS lies with their operators and that such weapons can “reduce the risk of intentional strikes against civilians and civilian facilities” and support “missions of maintaining or restoring international peace and security … [in] compliance with international law.” These are hollow statements made by a hollow regime.

No matter how elegant the regulatory framework or how straightforward the principles, adversarial nations are unlikely to abide by international agreements, especially knowing that weapons like drones make it easier for soldiers psychologically removed from the realities of the battlefield to kill others.

As Russia’s war in Ukraine illustrates, the use of LAWS can always be justified by reframing a conflict. Their ability to desensitize their users to the act of killing, however, must not be.

This article is republished from The Conversation under a Creative Commons license. Read the original article.